Making Sense of AI in Higher Ed: A Leadership Briefing for an Unsettled Field | The HELIOS Report, March 30, 2026

A practical frame for AI decisions at every level of the institution, built from analysis of recent surveys of presidents, faculty, staff, and students.

Dear Colleagues,

This edition of The HELIOS Report takes up an issue readers may be feeling increasing urgency about — the rapid growth and influence of AI in higher education. You no doubt have many dozens of playbooks, handbooks, checklists, case studies, and argumentative articles filling your inbox on the many questions AI raises about ethical use, academic integrity, equity, institutional transformation, and workforce development.

To help readers of The HELIOS Report reset on this expansive topic, we selected a range of recent surveys of multiple stakeholders with an eye toward identifying common themes that establish what conditions currently exist at many institutions. The surveys we discuss below present a sector in motion but not yet in command.

This executive briefing addresses that gap between awareness and coordinated response. Following a discussion of the cross-cutting themes and implications of the surveys we look at, we argue that individual colleges and universities need a productive near-term methodology for acting in conditions of uncertainty. We propose a set of four diagnostic questions that give campuses a disciplined way to move forward and make decisions. Each question works for either broad strategic conversations or isolated tactical decisions without requiring perfect information to be useful.

We hope this report will help you better understand the situation at your institution and identify what information and ways of thinking you need to move conversations forward productively. We expect this to be the first of several HELIOS Reports on AI, especially as higher education experiences the addition of agentic AI on top of generative AI. This edition tries to give leaders a reasonable way to channel the flood of information and questions they are encountering.

We welcome any feedback you have about this report and look forward to hearing whether it is useful to you and your colleagues.

- Ilene and Robert

I. Recent surveys on generative AI in higher ed

Presidents

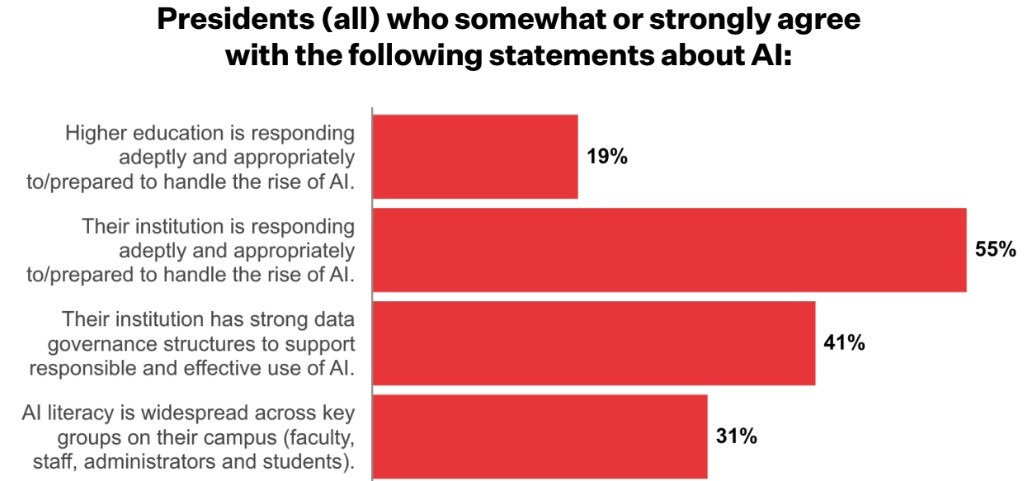

The 2026 Survey of College and University Presidents from Inside Higher Ed (March 10, 2026) collects 430 responses from U.S. presidents across public, private nonprofit, and for-profit institutions. While the survey is not primarily about AI (it covers financial stability, enrollment trends, political pressures, campus culture, student well-being, and technology), it presents a sector lacking adept responses to the challenges of AI.

Source: 2026 Survey of College and University Presidents, Inside Higher Ed

Faculty

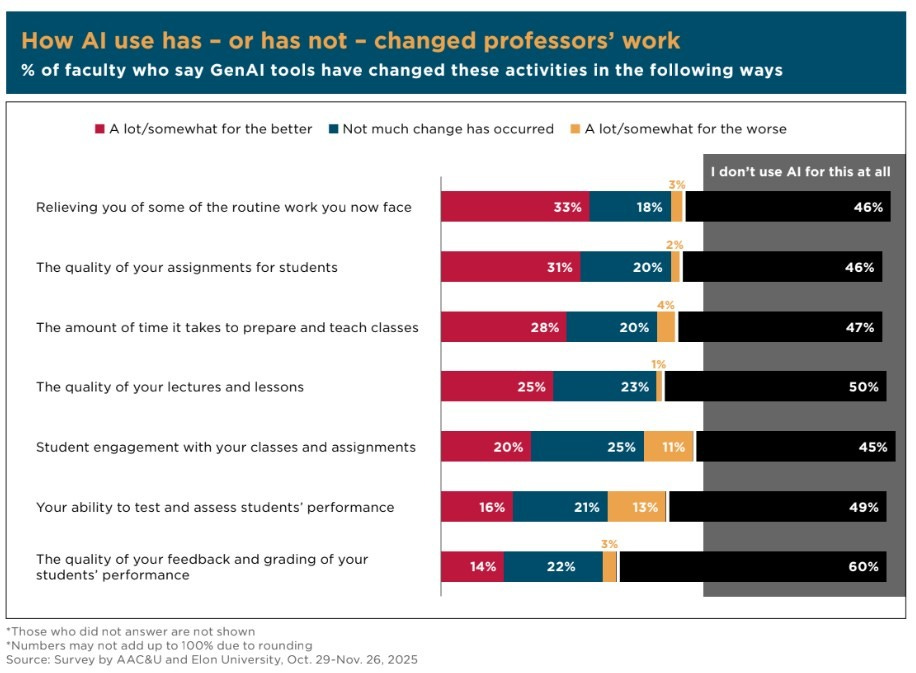

The AI Challenge: How College Faculty Assess the Present and Future of Higher Education in the Age of AI from the American Association of Colleges and Universities and Elon University (January 21, 2026) reports on a survey of 1,057 U.S. college faculty to assess how they perceive AI’s impact. Faculty view generative AI as a net risk to student learning and institutional integrity, despite acknowledging limited instructional benefits. The report frames AI as a systemic pressure on teaching, assessment, credentialing, and the definition of learning.

College Faculty Perceptions of Generative Artificial Intelligence in Higher Education, from College Board (February 25, 2026) reports widespread high levels of concern about the effects of AI on critical thinking, academic integrity, and student independence. Most faculty report using AI in their own work, though in limited ways. Policies and instructional approaches vary widely, and most faculty report low confidence in managing AI use.

Source: College Faculty Perceptions of Generative Artificial Intelligence in Higher Education, College Board

Operational administration and staff

AI in Higher Education: From Widespread Adoption to Strategic Integration (March 4, 2026) is the third annual report from the edtech vendor Ellucian. It draws on survey responses across roles including executive leadership, academic affairs, IT, and operations. The report details role-specific adoption, operational use cases, and the need for training, governance, and data infrastructure to support responsible implementation. It describes a sector where AI use among administrators has reached saturation and which is transitioning to institutional adoption and integration. It finds AI use concentrates in chatbots in financial aid, IT, and enrollment functions.

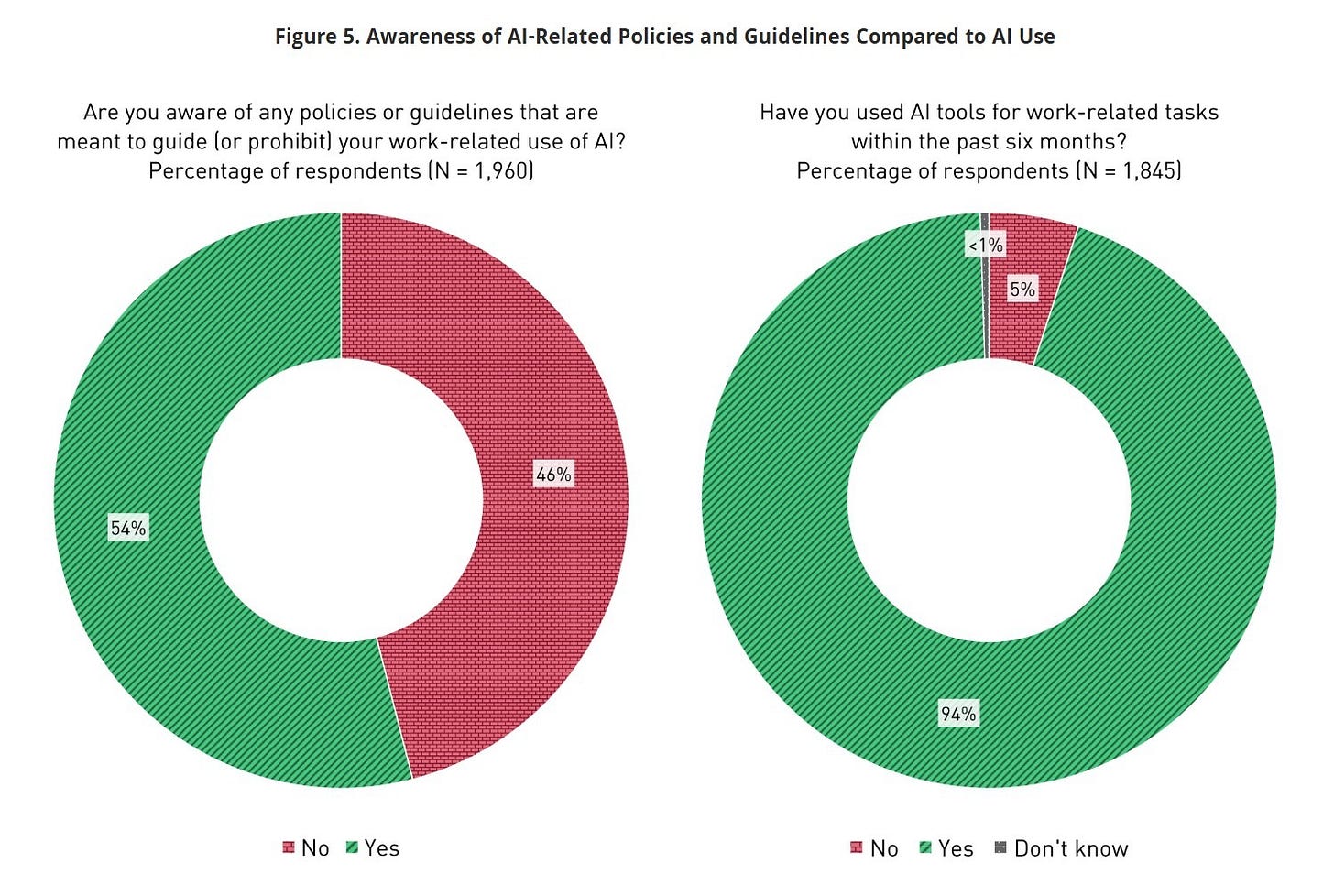

The Impact of AI on Work in Higher Education from EDUCAUSE (January 12, 2026) explores how faculty and staff are using AI and how institutions are responding. It finds that AI adoption is already widespread, largely informal, and outpacing institutional strategy. It documents institutional responses such as upskilling and emerging risks around governance, workload, and ROI.

Source: The Impact of AI on Work in Higher Education, EDUCAUSE

High school students, parents, teachers, and administrators

How Teens Use and View AI from the Pew Research Center (February 24, 2026) reports on a survey of U.S. teenagers examining their awareness, access, and use of generative AI tools. It shows AI use among teens is already widespread, though unevenly distributed, with significant implications for learning behaviors, academic integrity, and digital literacy development.

We also incorporated into our analysis a pair of reports from College Board on surveys administered in 2024 and 2025, including large samples of high school students, parents, AP teachers, principals, and school or district administrators: U.S. High School Students’ Use of Generative Artificial Intelligence: New Evidence from High School Students, Parents, and Educators (October 2025) and Variation in High School Student, Parent, and Teacher Attitudes Toward the Use of Generative Artificial Intelligence (December 11, 2025). Three themes emerge from the reports. First, student use is widespread and increasing, with most students reporting at least occasional use for schoolwork. Second, parents and administrators are broadly supportive of students learning to use AI, while teachers and school leaders express concern about academic integrity, skill development, and preparedness. Third, school and district policies vary substantially in access, rules, and delegation of authority to teachers or departments. The reports describe high school environments where adoption is moving quickly, adult stakeholders recognize both opportunity and risk, and formal policy and instructional practice remain unsettled.

College students

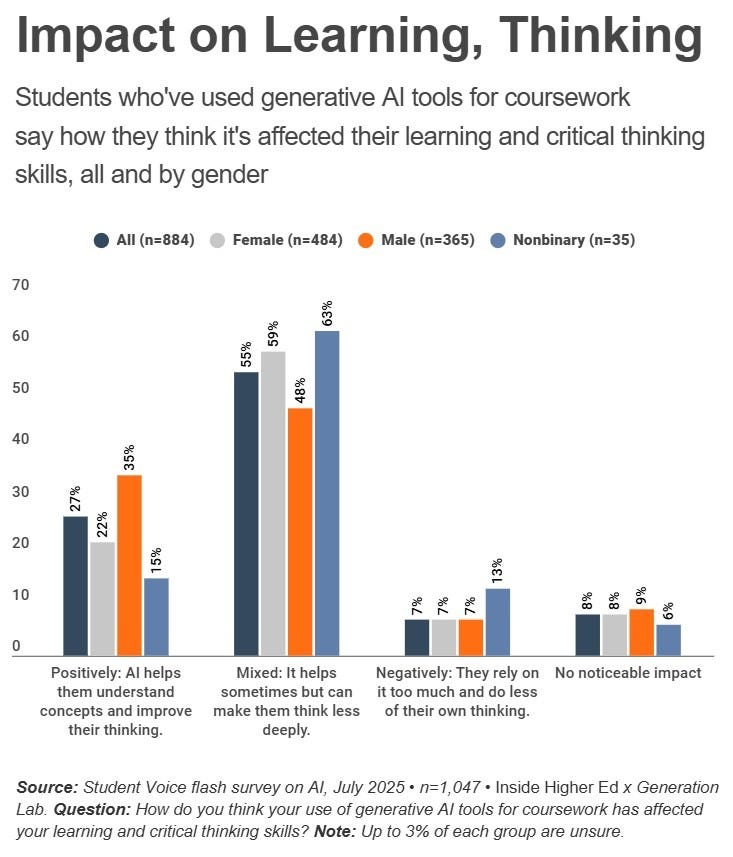

Recent surveys of college students are harder to find at present. How AI Is Changing—Not ‘Killing’—College (August 29, 2025) reports on Inside Higher Ed’s July 2025 Student Voice survey of 1,047 students. It finds that AI is widely used and is reshaping learning behaviors but has not reduced the perceived value of college. Students report mixed effects on critical thinking and call for institutional guidance focused on ethical use rather than enforcement. We supplemented our analysis below with two older sources. (1) Student Research Into How Students and Faculty Use AI, published in January 2026 by Every Learner Everywhere (which Robert wrote), reports on work done by student interns in the 2024-25 academic year to survey their peers and faculty. Even at that early date AI was normalized for many students, and a key finding is that many students were thinking critically about the influence of AI on their academic development. (2) Time for Class 2025: Empowering Educators, Engaging Students from Tyton Partners (June 11, 2025) surveyed current college students, along with administrators and instructors, on a range of issues. Its core finding is that, for most digital learning in higher ed, including AI, policy clarity and data integration lag behind implementation.

II. Cross-survey patterns

AI is nearing universal use by high school and college students. They are building their own norms in the absence of institutional norms. They use AI pragmatically to complete tasks. Their AI use is concentrated in research and writing workflows.

Faculty engagement is high but individualized and highly varied. They are adapting course by course, not through coordinated academic program planning. Faculty are experiencing and grappling with a disruption to how learning is defined and assessed.

Adoption in administrative or operations functions is ahead of academic uses. Operational integration is moving faster than institutional decision making. Staff in advising, enrollment, marketing, and IT report efficiency gains and clearer use cases.

Leadership awareness exists but has not translated into coordinated strategy. Ethical and pedagogical norms are being set informally by users, not institutions. Institutions and their leaders describe themselves as “not ready.” However, individual users behave as if systems and procedures for incorporating AI are already in place.

III. Interpretations

The institutional condition

AI magnifies existing institutional strengths and weaknesses. This applies to organizational capacity, not institutional size. AI pressures will compound the advantages of strong hiring practices and IT infrastructure. Conversely, AI pressures will compound operational gaps and procedural dysfunction. A small institution with strong culture and clear decision rights can punch above its weight; a larger institution with diffuse authority and weak processes will find AI sharpens fault lines leadership has deferred addressing.

AI adoption is outpacing institutional understanding. The surveys summarized above describe a field with inconsistent norms and organizational response. Large numbers of students, faculty, staff, and leaders are using AI in real academic and operational settings, but shared sense making has not kept pace. Campuses are operating with inconsistent expectations and unresolved questions about what requires protection, what can change, and who decides. That gap challenges decision structures, role definition, and professional identity. Some students and personnel will experience this as deeply destabilizing. This is a social transition as much as a technology transition.

AI adoption is jagged. Different parts of the institution are trying to solve different problems at different speeds. The surveys suggest the uneven pace results less from a lack of awareness and more from a lack of institutional mindset: a shared sense of how to make decisions related to AI that are mission aligned and that account for second- and third-order effects across the organization.

Institutions already have significant new technology risk exposure. Tools are in place that institutions did not choose, train for, or standardize. Few institutions are measuring ROI or improvements in learning. Selecting and implementing tools effectively requires data infrastructure, controls, and staff capacity that are not yet in place. Risk may be greatest in areas overlooked in the dominant conversations, such as financial aid offices that handle sensitive data and make high-stakes decisions.

Teaching and learning

Faculty concerns are broader and deeper than integrity alone. Faculty feel AI exposes how many current instructional and assessment practices reward output rather than learning. It raises theory-of-learning questions as much as classroom management questions. Respondents in the surveys anticipate significant change in how students complete academic work, how faculty evaluate that work, and how institutions signal the value of a degree. Some faculty see this disruption as an opportunity to clarify principles and to sharpen course design and teaching practices. Many feel an urgent requirement to communicate expectations about knowledge, judgment, and responsible use. They are looking to their institutions to provide faculty development for course redesign and to develop critical AI literacy.

Students are not necessarily naive users of AI. The surveys show students recognize the risks of AI to their academic development, and they make active, situational decisions about how to use it. They tend to position AI as support rather than replacement. They describe using AI for drafting, brainstorming, editing, and research support. Many students express concern that AI will become a shortcut that limits their critical thinking or the development of their own perspectives and voices. That does not mean, however, that students have stable frameworks for AI use or that their caution reliably shapes their behavior. Under pressure or when expectations are unclear, students default to what helps them efficiently complete graded assignments. Educators have an opportunity to convert student awareness and inquiry into productive academic norms.

Source: How AI Is Changing—Not ‘Killing’—College, Inside Higher Ed

AI will amplify equity gaps. Though many students are experienced with AI, they are not a monolith. The reports above, including those on surveys of both current and prospective college students, show they will arrive in and progress through college with varied AI experiences. Those differences will run along multiple axes: socioeconomic background, access to premium versions of AI tools, disciplinary practices, and prior instruction. Students are developing varying belief systems about authorship, effort, and learning. The assumption that all students are tech savvy flattens a population whose preparation will differ significantly by background and context. Equity demands that advising, course design, and academic expectations account for a range of experiences and readiness.

In short — Individual actors are not waiting for consensus

AI summons existential conversations about purpose, pedagogy, and institutional identity. Those conversations are necessary, but campuses cannot wait for a philosophical consensus before acting. The work requires parallel tracks: making space for deeper questions while acknowledging that AI will keep imposing its influence without regard to a community’s timeline for discussions. Leaders who hope to settle every foundational question before moving forward will find the environment has moved without them.

IV. Implications by stakeholder group

President and cabinet. Only 55 percent of presidents in the Inside Higher Ed survey say their institution is responding appropriately to AI. The cabinet needs to determine who has authority to set AI standards in academic contexts and operational contexts and where those two domains require coordination before they collide. The four questions in Section V of this report offer a shared orientation that can cross those lines. Cabinet members who adopt them as a common frame give the institution something more durable than a policy: a consistent way to evaluate individual decisions before the policy catches up.

Provost, deans, and academic program design. The AAC&U/Elon University and College Board surveys describe faculty experiencing a disruption to what counts as student work, what assessment measures, and whether current course designs reward output or development. Faculty redesigning courses without institutional support will do so inconsistently. Academic affairs leadership needs a guiding framework to build structured faculty development that is coherent across departments.

Institutional research, analytics, and the registrar. A notable absence in the surveys reviewed above is institutions measuring what AI actually changes in learning outcomes, retention, or academic performance. Data is not informing decisions about AI policy and tool adoption. IR and the registrar’s office have a shared interest in defining what consistent, AI-aware academic standards look like before variation hardens into inconsistency that is difficult to audit.

Advising and career services. The Pew Research Center and College Board surveys show that incoming students will arrive with sharply different AI experiences shaped by prior instruction, family resources, and school policies. Students who used AI extensively in high school with no instructional scaffolding and students who had thoughtful guidance present different challenges for academic integration. Meanwhile, employers are developing their own AI expectations, and students need help understanding which uses of AI in professional settings signal competence. Advising and career services need clear institutional positions to communicate, and they need faculty and academic affairs engaged in developing those positions with them.

Health, counseling, and cultural programming. Students experiencing financial stress, mental health challenges, or identity-related marginalization may reach for AI as a substitute for support they cannot easily access, or they may encounter AI-assisted services that handle their cases without the relational attention those cases require. Health, counseling, and cultural programming staff are often the first to see when institutional processes fail students. They should be included in any campus AI task force, because their vantage point identifies equity risks not visible from elsewhere.

Finance, budget, and IT leadership. The Ellucian report describes AI use in financial aid, enrollment, and IT as already at saturation among administrative staff. CFOs and CTOs need to know whether those tools were selected through any institutional process, whether they involve student data, and what the liability exposure is if performance or privacy problems emerge. AI tools adopted through a deliberate institutional process carry implementation and training costs that need to show up in budget planning.

Faculty development. Centers for teaching and learning are positioned to convert individual effort into institutional knowledge if they are clear about what they are trying to accomplish. A center that builds workshops, peer learning communities, and redesign consultations around a limited set of questions like those in Section V gives faculty a common language without demanding consensus on contested pedagogical questions. The goal is shared vocabulary and deliberate reflection across departments that are currently operating in isolation.

“Middle management.” Leaders in department chair, program director, and dean roles are being asked to make AI-related decisions without shared frameworks, consistent information, or organizational cover for uncertainty. Leadership development for this crucial “interpretation” layer of the institution should prioritize four capabilities: holding institutional uncertainty openly; maintaining space for human judgment; adopting an institutional mindset; and gaining the financial, regulatory, and cross-functional literacy to evaluate AI use cases against mission and constraints.

Marketing and communications. The surveys offer something more useful than narratives of alarm about academic integrity: evidence that students, faculty, and leaders all care about learning, that the field has not surrendered its educational commitments, that meaningful questions remain genuinely open, and that creative scholars are engaging students in exciting new intellectual projects presented by AI. Institutions should acknowledge the disruption while articulating principled, mission-grounded frameworks for navigating it.

Workforce and L&D partners. Employer partners and workforce development organizations share the equity exposure the surveys describe. Students exiting workforce programs with habits formed under pressure rather than principle create downstream challenges for employers and L&D professionals who assume a baseline. Partners that co-develop AI literacy expectations with institutions, rather than waiting for graduates to arrive, are better positioned to shape what those expectations look like.

Philanthropic partners. Nonprofits have an opportunity to make field-building investments that develop shared language and cross-functional coordination, grow understanding of the impact of scattered activities, and promote coherent institutional practices. Philanthropic organizations with a sector-wide perspective are also positioned to support institutions and units where AI risks magnifying existing inequities in advising, financial aid, teaching support, and student preparation. Colleges and universities need help building the faculty development, student-support capacity, and data literacy that create conditions for better judgment.

V. The leadership demand: A common frame for an unsettled environment

College and university communities have an opening to consolidate and raise the quality of conversations and activity already underway. The groups represented in these surveys care intensely about learning, integrity, authorship, fairness, and preparation. The argument on campuses remains fundamentally about education, mission, and strategy. However, definitive responses to the questions being raised are not available as quickly as the environment demands. People across the institution are feeling simultaneous pressures to act and to engage in protracted information gathering and planning.

Leaders can respond in the short term by articulating a stable orientation and productive guiding questions like the four below. These questions are designed to function at every level of the institution, from an individual staff member evaluating a new workflow to a cabinet discussing institutional risk to a faculty member redesigning an assignment. They do not resolve the uncertainty, but they give people a disciplined way to move through it and avoid paralysis.

1. How does this use of AI preserve human judgment?

AI can accelerate processes, surface patterns, and generate drafts. Increasingly, it can act on its own toward a stated goal without guidance. The question for any use case is where the humans involved retain the capacity and the responsibility to evaluate and decide. A financial aid office using AI to flag application anomalies preserves judgment if counselors review and act on the flags. It erodes judgment if the system makes determinations that staff simply accept. A student using AI to brainstorm an argument preserves judgment if the student evaluates and develops the ideas. The distinction applies across every function: advising, hiring, assessment, communications, program review, research, teaching, and learning.

2. Does this use of AI respect human processing speed?

AI produces output faster than humans can evaluate it well. That gap is where quality erodes. When AI accelerates output, workers tend to expand their scope, blur work boundaries, and manage more simultaneous tasks, often without realizing the cumulative effect on their judgment. (We are influenced here by AI Doesn’t Reduce Work—It Intensifies It by Aruna Ranganathan and Xingqi Maggie Ye in The Harvard Business Review, February 9, 2026.) Careful people, working in good faith, can find themselves processing AI output at a pace that doesn’t leave room for the judgment their roles require. Consider a faculty member prompting an AI tool to generate course materials, an advisor using AI to draft outreach to students at risk of stopping out, or a financial aid officer using AI for ideas to optimize yield. In each case, the speed of production needs to be matched by the time and cognitive space to evaluate what was produced. Institutions that adopt AI primarily to accelerate work without addressing the processing-speed question are likely to find quality trade-offs showing up later in enrollment, retention, learning outcomes, and morale.

3. How does this use of AI respect human privacy and dignity?

AI applications in higher education touch student records, health information, financial data, and employment decisions. That data needs to be handled securely, but privacy should be paired with dignity. When AI tools profile student behavior to predict attrition or flag risk, institutions should examine whether those interventions treat students as agents or as objects of management. When AI assists in hiring decisions or personnel evaluations, the user needs to ask whether the people affected would recognize the process as fair and respectful if they could see it.

4. How does this use of AI align with the institution’s mission and its understanding of learning?

Every institution holds commitments, sometimes implicit, about what education is for, what counts as knowledge, and what the relationship between teacher and student should look like. AI decisions that proceed without reference to those commitments risk optimizing for efficiency at the expense of purpose. A center for teaching and learning helping faculty redesign courses around AI should ground that work in the institution’s own educational philosophy. An enrollment office adopting AI-driven communications should test whether the resulting messages reflect the institution’s voice and values. This question forces alignment between operational decisions and the educational identity the institution claims.

VI. Strategic conversations

The questions in Section V help individuals and teams make better decisions inside an uncertain environment. The questions below ask whether the institution is building the capacity to learn from those decisions over time.

For cabinet discussions

Which AI decisions currently being made at the department or office level require cabinet-level coordination, and what is the cost of continuing to let those decisions happen informally?

Where is individual AI experimentation generating useful institutional knowledge, and where does that knowledge disappear when people change roles or leave?

The surveys show that operational AI adoption is running ahead of academic adoption. Does that asymmetry hold at our institution? Does it reflect a deliberate institutional choice? If not, who is responsible for closing it?

Which functions carry the most AI risk right now, and who is accountable for that exposure?

What would the institution need to measure to know, two years from now, whether its current AI posture served its educational mission or undermined it?

For board discussions

The Inside Higher Ed survey shows that only 55 percent of presidents believe their institution is responding appropriately to AI. Do you have a good sense of whether your institution’s leadership would place it in that group, and why?

Leadership requires the steadiness to navigate uncertainty about AI and the analytical skills to evaluate AI decisions against mission rather than peer behavior. How is the board assessing whether this institution has that capacity at the leadership level?

If the institution’s AI posture over the next three years drifts toward efficiency and away from educational mission, does it have the governance mechanisms to make timely decisions and correct that drift?

Institutions that lack data infrastructure, decision-making processes, and staff capacity to implement AI responsibly accumulate liability they have not priced in. What does the board currently know about this institution’s readiness in those areas?

Board members make decisions in their own organizations about when to adopt new technology, how to manage the risks, and how to know whether it is serving the organization’s mission. What would it take to bring that same discipline to how this board thinks about AI at this institution?

Can we help?

Ilene Crawford works directly with presidents, cabinets, provosts, and their teams navigating the same coordination problems the surveys describe. Connect with her via her website or LinkedIn.

Robert McGuire develops briefings, trend reports, and playbooks that translate sector signals into actionable insights for universities, nonprofits, and edtech companies. Connect with him via his website or LinkedIn.